The Problem: Fences Hold Factories Back

In most factories today, robots work behind physical barriers — fences, cages, light curtains. The moment a person steps too close, the robot stops. It’s safe, but it’s also rigid. These barriers waste valuable floor space, slow down workflows, and make it impossible for humans and robots to truly work side by side.

The Cynergy4MIE project is working to change that. The goal: remove the fences and replace them with something smarter — a system that always knows where every person is.

Our Approach: Seeing Through Many Eyes

Instead of physical barriers, we use multiple cameras positioned around the workspace, each covering the area from a different angle. An AI model (a neural network called YOLO) analyzes every camera feed in real time, detecting each person visible in the frame.

But detecting people in camera images is only the first step. The system then projects each detection onto a floor plan — converting pixel positions into real-world coordinates in meters. Since multiple cameras often see the same person from different viewpoints, a fusion algorithm combines all observations into a single, consistent map.
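The projection and fusion steps described above can be sketched as follows. This is a minimal illustration, not the project's actual implementation: it assumes each camera has a pre-calibrated 3x3 homography `H` that maps image pixels to floor coordinates in meters, and it uses a simple greedy distance-based merge as a stand-in for the fusion algorithm. The names `pixel_to_floor`, `fuse_detections`, and `merge_radius_m` are hypothetical.

```python
from math import hypot

def pixel_to_floor(H, u, v):
    """Map pixel (u, v) to floor coordinates (x, y) in meters.

    H is a 3x3 homography given as nested lists (assumed to come from
    a prior camera calibration, which is not shown here).
    """
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel does not map to a finite floor point")
    return (x / w, y / w)

def fuse_detections(points, merge_radius_m=0.5):
    """Greedily merge floor points that lie close together.

    A point joins the first cluster whose centroid is within
    merge_radius_m; otherwise it starts a new cluster. Returns one
    averaged (x, y) position per cluster.
    """
    clusters = []
    for p in points:
        for cluster in clusters:
            cx = sum(q[0] for q in cluster) / len(cluster)
            cy = sum(q[1] for q in cluster) / len(cluster)
            if hypot(p[0] - cx, p[1] - cy) <= merge_radius_m:
                cluster.append(p)
                break
        else:
            clusters.append([p])
    return [
        (sum(q[0] for q in c) / len(c), sum(q[1] for q in c) / len(c))
        for c in clusters
    ]
```

In practice the pixel passed to `pixel_to_floor` would typically be the bottom center of a person's bounding box, since that is the point most likely to lie on the floor plane.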

The result: a live bird’s-eye view of the workspace showing exactly where every person is, updated several times per second. Each person receives a persistent identity — the system doesn’t just see “a person,” it knows it’s the same person moving through the space.
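One simple way to give each person a persistent identity is to match every new position to the nearest track from the previous update. The sketch below assumes this nearest-neighbor approach purely for illustration; the article does not state which tracking method the system uses, and `SimpleTracker` and `max_jump_m` are hypothetical names.

```python
from itertools import count
from math import hypot

class SimpleTracker:
    """Nearest-neighbor tracker that keeps IDs stable between updates."""

    def __init__(self, max_jump_m=1.0):
        # Largest distance a person may move between two updates
        # and still be treated as the same track (assumed value).
        self.max_jump_m = max_jump_m
        self.tracks = {}        # track id -> last (x, y) in meters
        self._ids = count(1)    # source of fresh track ids

    def update(self, positions):
        """Assign each fused position to an existing or new track id."""
        unmatched = dict(self.tracks)
        assigned = {}
        for pos in positions:
            best_id, best_dist = None, self.max_jump_m
            for track_id, prev in unmatched.items():
                dist = hypot(pos[0] - prev[0], pos[1] - prev[1])
                if dist <= best_dist:
                    best_id, best_dist = track_id, dist
            if best_id is None:
                best_id = next(self._ids)   # nobody nearby: new person
            else:
                del unmatched[best_id]      # each track is used once
            assigned[best_id] = pos
        # Tracks without a match are dropped immediately in this sketch;
        # a more robust tracker would keep them alive for a few updates.
        self.tracks = assigned
        return assigned
```

Calling `update` once per fused frame returns a dictionary from track ID to position, so the same ID follows a person as long as they move less than `max_jump_m` between updates.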

To make tracking even more robust, the system can also fuse camera data with worker authorization data from small wireless tags, based for example on Ultra-Wideband (UWB) or RFID. The tags worn by workers identify them as authorized and, depending on the technology, can also localize them; this information is combined with the camera-based tracking. With UWB, this adds a layer of redundancy: if a person is temporarily hidden from all cameras, the UWB signal can keep their position updated until they become visible again.
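The redundancy idea can be expressed as a simple priority rule: use the camera position when one exists, fall back to UWB when the person is occluded, and otherwise keep the last known position. This is a deliberately simplified sketch with hypothetical names; a real system would more likely blend both sources, for example with a filter, rather than switch between them.

```python
def current_position(camera_pos, uwb_pos, last_known):
    """Pick the best available position for one tracked person.

    Each argument is an (x, y) tuple in meters, or None when that
    source has no reading. Returns (position, source_label).
    """
    if camera_pos is not None:
        return camera_pos, "camera"
    if uwb_pos is not None:
        # Person is hidden from all cameras: UWB bridges the gap.
        return uwb_pos, "uwb"
    # No fresh data at all: report the last known position as stale.
    return last_known, "stale"
```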

Testing in a Virtual Factory

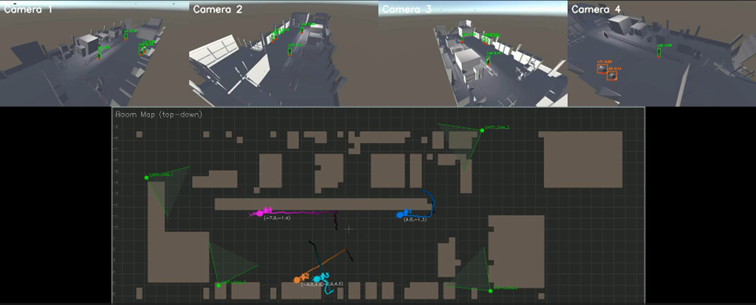

Before installing real cameras, we needed a way to develop and test the tracking system quickly and safely. So we built a virtual factory — a 3D simulation of an industrial workspace, complete with walls, obstacles, and animated people walking around.

The virtual cameras in this simulation stream video to our tracking system in exactly the same way real cameras would. This means we can experiment freely: try different camera placements, add more people, rearrange obstacles — all without touching any hardware.

The video below shows the system in action. At the top, you can see the four camera views with detected people highlighted. At the bottom, the room map displays each person’s tracked position in real time as a colored dot with a motion trail.